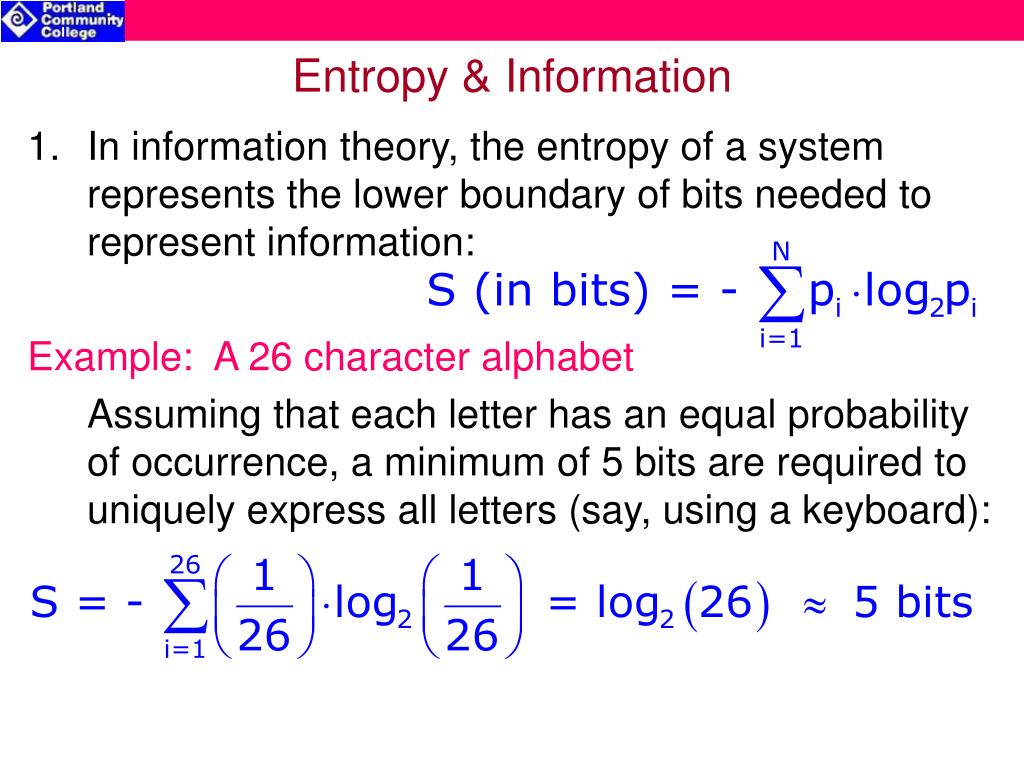

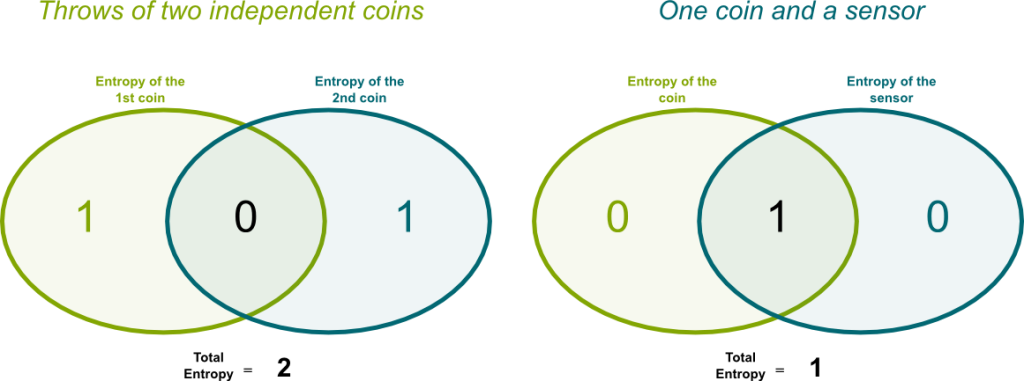

Let’s talk about entropy and mutual information. Entropy is understood in a couple of different ways. From a lay perspective, entropy is the magnitude of surprise: when something has a high level of surprise, it has high entropy. But what is surprise? Surprise can be defined as the level of uncertainty: if something is highly uncertain, then there is a lot of surprise to be expected.

Let’s say the weather can be in any one of three states: sunny, cloudy or rainy. If it is always sunny and never cloudy or rainy, then the weather is said to be not surprising, or to have very little uncertainty. However, when the weather is equally likely to be sunny, cloudy or rainy, it is very difficult to guess what the weather will be, because all states are equally probable. There is high uncertainty, or a high level of surprise, when the states of the weather are equally probable.

As you can see, weather is a categorical variable having 3 states: sunny, cloudy or rainy. Each state takes on a probability, and these probabilities must sum to 1. The distribution of probabilities over the states/values of weather is called the probability mass function. When the state probabilities are all equal, there is maximal entropy; when only one state is possible, there is minimal entropy.

Before we compute an example for each of these situations, let’s see how entropy is defined: \(H(X) = -\sum_i p(x_i) \log p(x_i)\), where \(p(x_i)\) is the probability of the i-th value of \(X\).

If you recall, \(\log x\), where the domain is \(x \in (0, 1]\), has the range \((-\infty, 0]\). Thus \(\log p(x_i)\) is never positive, which is why, after we sum over the non-positive terms, we add a negative sign at the front to make \(H(X)\) non-negative. In fact, in practice, if \(p(x_i) = 0\), then in many applications the term with \(\log p(x_i) = \log 0\) is simply discarded. This makes sense, since if there is no chance of observing a value/state, then it is as if the value/state does not even exist.

Let’s visualize the natural log, \(\log_e\), log base 2, \(\log_2\), and log base 10, \(\log_{10}\).
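To make the entropy definition concrete, here is a minimal Python sketch of \(H(X) = -\sum_i p(x_i) \log p(x_i)\) applied to the weather example. The function name `entropy`, its `base` parameter, and the three probability lists are illustrative assumptions, not values from the post. Terms with \(p(x_i) = 0\) are skipped, following the convention of discarding \(\log 0\).

```python
import math


def entropy(probs, base=math.e):
    """Entropy H(X) = -sum_i p(x_i) * log p(x_i) of a probability mass function.

    `probs` holds one probability per state; `base` selects the logarithm
    (e for nats, 2 for bits, 10 for base-10 units).
    """
    if abs(sum(probs) - 1.0) > 1e-9:
        raise ValueError("probabilities must sum to 1")
    # Skip states with p(x_i) = 0: the log 0 term is discarded by convention.
    total = sum(p * math.log(p, base) for p in probs if p > 0)
    # Each term is non-positive, so negate the sum (and avoid returning -0.0).
    return -total if total != 0 else 0.0


# Hypothetical distributions over the states (sunny, cloudy, rainy).
always_sunny = [1.0, 0.0, 0.0]   # only one state is possible
mostly_sunny = [0.8, 0.1, 0.1]   # one state dominates
uniform = [1 / 3, 1 / 3, 1 / 3]  # all states equally probable

for name, pmf in [("always sunny", always_sunny),
                  ("mostly sunny", mostly_sunny),
                  ("uniform", uniform)]:
    print(f"{name:>12}: "
          f"nats={entropy(pmf):.4f}, "
          f"bits={entropy(pmf, base=2):.4f}, "
          f"base10={entropy(pmf, base=10):.4f}")
```

Under these assumed probabilities, the single-state distribution has zero entropy and the uniform one has the largest entropy of the three. Changing the log base only rescales the result by a constant factor, so the ordering of the distributions stays the same.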
